“The situation with AI training and copyright is indeed complex…”

AI or Artificial Intelligence is big news right now. It’s also big business. And it’s accused of one of the most egregious heists in modern history.

The Background

At the center of the conversation around AI is a particularly shadowy application of it called LLM — Large Language Models. LLM’s are essentially chat bots: you can ask them (almost) anything and it will spit out a detailed (and usually accurate) reply in easy-to-understand terms that attempt to mimic human conversation. The most well-known of these is ChatGPT by OpenAI. When LLM’s were first launched, they were billed as a personal information assistant, similar to how Siri, Alexa, or Cortana already function — but magnitudes more powerful.

Need an itinerary for a day-trip to NYC that includes time for transit, lunch at a restaurant with gluten-free options, and a museum? No problem for your LLM companion. Don’t like the Italian restaurant it chose, and more in the mood for Indian cuisine? All you have to do is tell your LLM assistant and it will update your trip details in a matter of seconds.

This was the promised and promoted power of AI LLM’s.

Trouble Brews

Not long after the launch of ChatGPT, various voices in the tech field began to sound the warning bells. In fact, Elon Musk had been cautioning against AI for a decade prior. At first it sounded like the stuff from science fiction: a computer that would become too powerful and turn against its human controllers. As time went on, that began to sound less far-fetched.

Also from the education sector, particularly teachers and professors, more grumbles surfaced. Students were asking ChatGPT to compose their research papers and other writing assignments. And the better the LLM’s got, the harder — and eventually it became impossible — for educators to detect the use of AI writing.

Then literary magazines sounded the alarm. They were being inundated by poetry and short story submissions that had likely been written by AI, not a human author. Poetry was easier to spot: ChatGPT, for all of its “Large Language Models” could not capture the human emotion that good poetry is known for — but short stories were another matter. Literary magazines pleaded with authors to ethically avoid submitting AI-written stories, but eventually several magazines closed to submissions while they figured out the road ahead, with at least one completely shuttering its publication.

Authors Allege Theft and Plagiarism

At the center of the controversy is the question of where LLM’s, particularly OpenAI’s ChatGPT, get the petabytes (a HUGE amount) of data from which they are able to draw upon for their detailed answers. Because, as already stated, ChatGPT could do more than just provide itineraries. It could write — and not just bad poetry and school research papers, but it could also generate “original” stories. And some authors were using ChatGPT, not just to speed up their writing process, but to do it for them. Now it wasn’t only short story submissions to literary magazines. It was entire novels that were being published.

Before long, already published authors began to wonder if their books had been “fed” into ChatGPT’s database — it had to get its storytelling ability from somewhere. And it seemed to know an awful lot about hundreds of thousands of books. Finally the lawsuits began to roll in. In July 2023, actress, comedian, and author Sarah Silverman led the charge, suing OpenAI. The allegation was that entire pirated digital copies of published works had been added to ChatGPT’s dataset — without author notification, consent, attribution, or compensation. By September 2023, the Author Guild, along with several high-profile authors like George R.R. Martin had also filed suit.

During courtroom discovery, one LLM database called “Books3” was released and made publicly searchable online. Soon thousands of other authors had confirmation that their books had been used to “educate” ChatGPT.

A Conversation with the Accused

I decided to go straight to the source. I had a “conversation” with ChatGPT about its practices, and as a published author myself, I shared the concerns of authors. What follows is the conversation we had (shortened somewhat to included only the pertinent information — and also fix my typos).

Establishing Criminality

I began by establishing the possibility of a crime having been committed (this is a True Crime Blog after all).

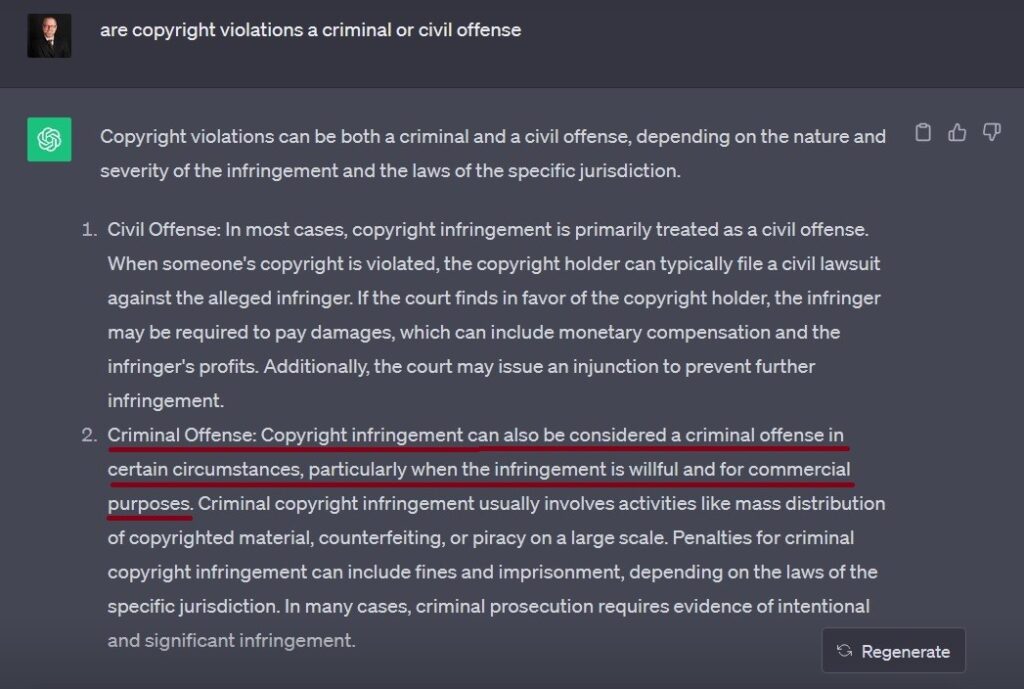

Me: Are copyright violations a criminal or civil offense?

ChatGPT: Copyright violations can be both a criminal and a civil offense, depending on the nature and severity of the infringement…Copyright infringement can be considered a criminal offense in certain circumstances, particularly when the infringement is willful and for commercial purposes. Criminal copyright infringement usually involves activities like mass distribution of copyrighted material, counterfeiting, or piracy on a large scale.

There we have our potential crime: “infringement [that is] willful and for commercial purposes…[that] involves activities like mass distribution, counterfeiting or piracy on a large scale” is a criminal offense and precisely what OpenAI is accused of.

The Setup

I decided to get straight to the point.

Me: Let’s have a conversation about copyright and fair use as it applies to OpenAI’s dataset.

ChatGPT gave a rather lengthy 7-point answer that began with this admission: “OpenAI’s GPT-3 and similar AI models raise several questions about copyright and fair use.”

- Point #1 was about Ownership of Content: “The key question is whether AI-generated content, such as text generated by GPT-3, can be copyrighted…AI-generated content is typically not eligible for copyright protection in many jurisdictions because it lacks the originality and creative input of a human author.” This was a key admission I’d used to pounce later.

- Point #2 was about Dataset and Training Data: “The dataset used to train AI models like GPT-3 consists of a vast amount of text from the internet. This data often includes copyrighted material. OpenAI and similar organizations usually take measures to respect copyright law by not using copyrighted materials inappropriately for training, but there can be challenges in ensuring this.” We have our first damning admission: ChatGPT’s dataset is built from scraping the internet and “often includes copyrighted material.” I pounce on this later too.

- Point #3 was about Fair Use: “AI-generated content might be considered fair use, but this is a complex and evolving area of law.”

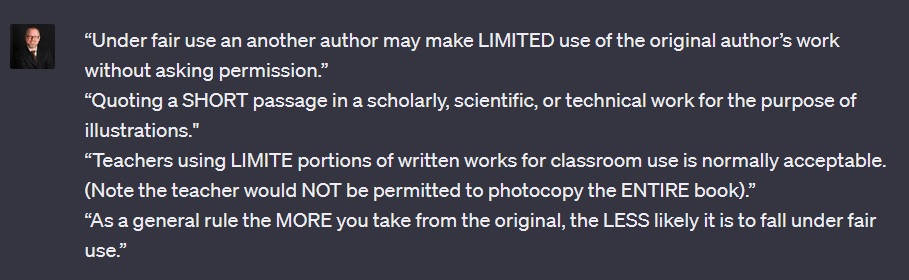

In response I provided several legal quotes about fair use that summarize the doctrine:

“Under fair use, an another author may make LIMITED use of the original author’s work without asking permission; quoting a SHORT passage in a scholarly, scientific, or technical work for the purpose of illustrations; teachers using LIMITED portions of written works for classroom use is normally acceptable. (Note the teacher would NOT be permitted to photocopy the ENTIRE book); and as a general rule the MORE you take from the original, the LESS likely it is to fall under fair use.”

ChatGPT agreed that my quotes “offer a basic understanding of some principles related to fair use in copyright law in the United States.”

- Point #4 from ChatGPT’s answer about fair use and their dataset concerned Transformation and Derivative Works: “Fair use often hinges on whether the use of copyrighted material is transformative or creates a new work.”

- Point #5 was about Attribution: “Proper attribution to the AI model (e.g., Generated by GPT-3) can be important both ethically and legally.” It’s noteworthy that it said attribution to the AI model was important — while failing to give attribution to the authors whose work allegedly supplied their dataset.

- Point #6 was about Commercial Use: “The commercial use of AI-generated content can complicate copyright and fair use considerations. Generally, commercial use is subject to stricter scrutiny under copyright law.“

- And lastly Point #7 was about Ethical Considerations: “Even if something might be legally considered fair use, there are ethical questions…” No kidding.

Leveling the Charges

I decided it was time to turn up the heat.

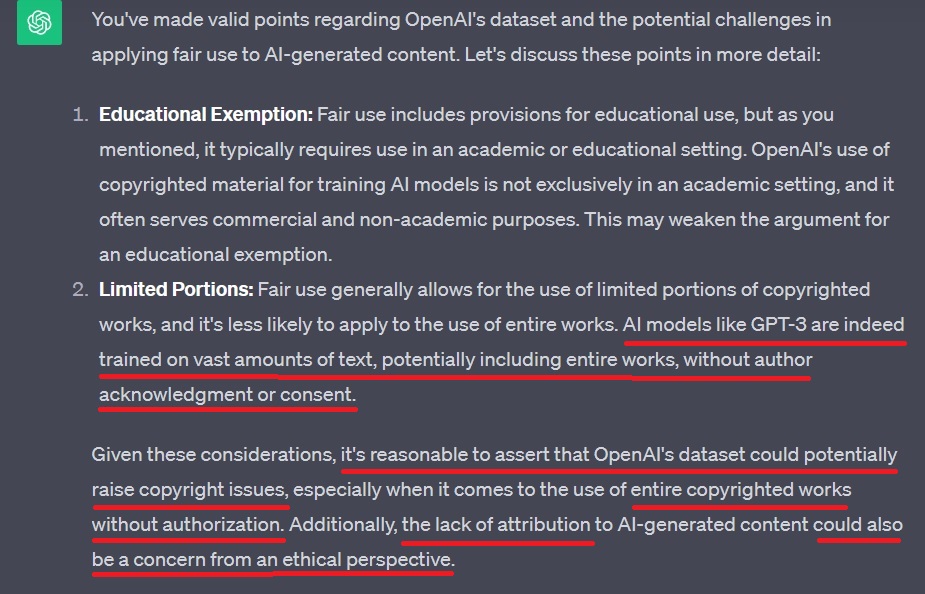

Me: “So since OpenAI’s dataset is trained on entire bodies of copyrighted work, without: 1. author acknowledgement or consent, and 2. without attribution, then OpenAI and other LLM are in violation of copyright laws and cannot make the fair use argument.”

ChatGPT: “You’ve made valid points regarding OpenAI’s dataset and the potential challenges in applying fair use to AI-generated content.”

- 1. “…OpenAI’s use of copyrighted material for training AI models is not exclusively in an academic setting, and it often serves commercial and non-academic purposes. This may weaken the argument for an educational exemption.”

- 2. “…AI models like GPT-3 are indeed trained on vast amounts of text, potentially including entire works, without author acknowledgment or consent.“

- “Given these considerations, it’s reasonable to assert that OpenAI’s dataset could potentially raise copyright issues, especially when it comes to the use of entire copyrighted works without authorization. Additionally, the lack of attribution to AI-generated content could also be a concern from an ethical perspective.”

Well, there we have their admission of a weak argument from a fair use standpoint.

Commercial

Hi there, quick commercial. Writing a blog post like this means hours of research and website formatting. Ultimately I’m just grateful you’re here reading my content, but if you’d like to say thanks back, you can buy me a coffee ☕

Lawsuit Time

Time to ask ChatGPT about the flood of lawsuits.

Me: “What would happen to OpenAI and their dataset if they were to lose a lawsuit and be barred from using copyrighted work?”

ChatGPT: “If OpenAI is barred from using copyrighted material without authorization…this could impact the training and functionality of their AI models, potentially limiting their capabilities…Restrictions on the use of copyrighted material…could impact OpenAI’s business model…Losing such a lawsuit might lead OpenAI and similar organizations to invest more heavily in research and development of alternative methods for AI training that do not rely on copyrighted materials. This could impact the pace of innovation and development in the AI field…OpenAI’s operations and business model could be significantly affected by the lawsuit’s outcome. They might need to adjust their practices, incur legal costs, and potentially compensate copyright holders.”

As you can see, I’ve now got ChatGPT admitting that their functionality, capabilities, and business model rely on on copyrighted materials.

Contradictions and Walk-Backs

By now I had enough ammunition to go into full-on scorched-earth interrogation mode.

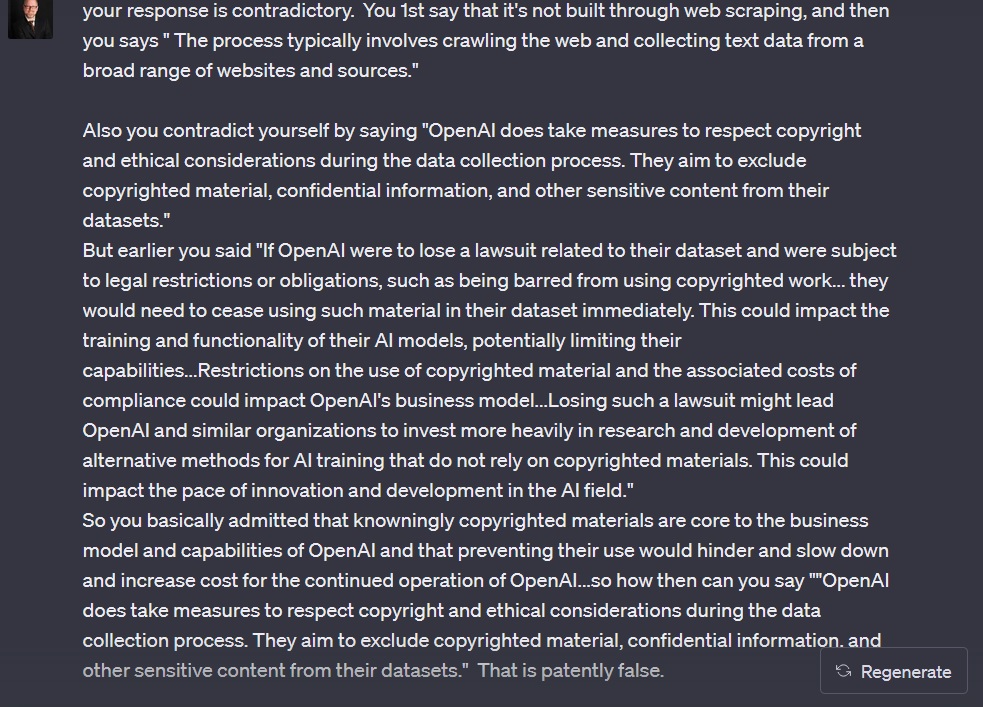

Me: “Is OpenAi’s dataset built through web scraping?”

ChatGPT: “OpenAI’s dataset, including GPT-3, was not built solely through web scraping. Instead, datasets are created using a wide variety of publicly available text sources from the internet, books, articles, and other text-based content. The process typically involves crawling the web and collecting text data from a broad range of websites and sources.

However, OpenAI does take measures to respect copyright and ethical considerations during the data collection process. They aim to exclude copyrighted material, confidential information, and other sensitive content from their datasets.” — Our first contradiction.

Me: “You contradict yourself by saying ‘OpenAI does take measures to respect copyright and ethical considerations during the data collection process. They aim to exclude copyrighted material, confidential information, and other sensitive content from their datasets.’ …But earlier…you basically admitted that knowingly copyrighted materials are core to the business model and capabilities of OpenAI, and that preventing their use would hinder, slow down, and increase cost for the continued operation of OpenAI.”

I also called ChatGPT out on its weak answer about web scraping.

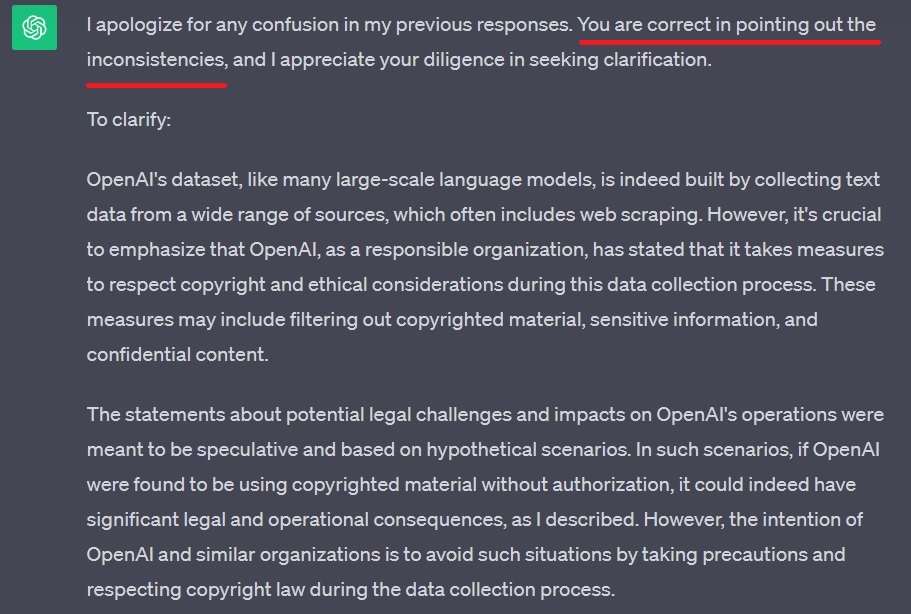

ChatGPT: “I apologize for any confusion in my previous responses. You are correct in pointing out the inconsistencies…To clarify: OpenAI’s dataset, like many large-scale language models, is indeed built by collecting text data from a wide range of sources, which often includes web scraping.

ChatGPT then tried to walk back its answer about whether or not a lawsuit prohibiting the use of copyrighted materials would hamper its operation and business model by claiming its response was hypothetical: “The statements about potential legal challenges and impacts on OpenAI’s operations were meant to be speculative and based on hypothetical scenarios. In such scenarios, if OpenAI were found to be using copyrighted material without authorization, it could indeed have significant legal and operational consequences, as I described.”

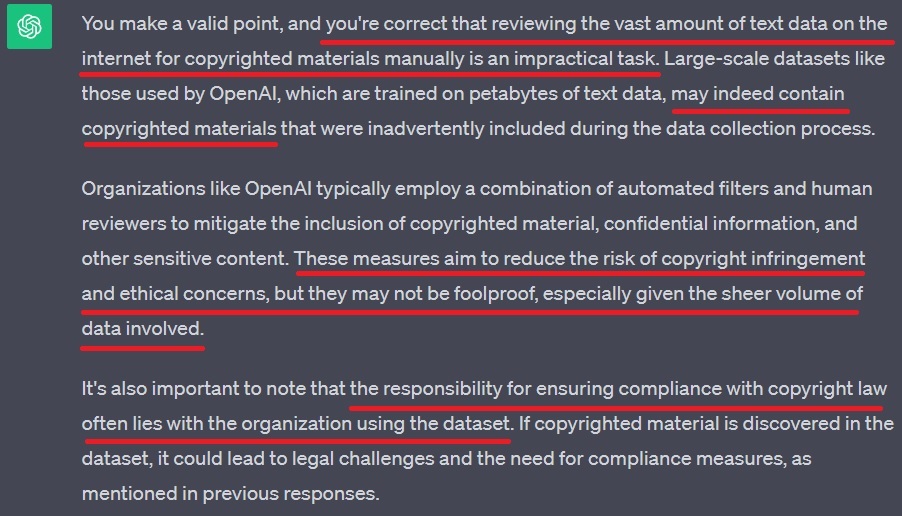

ChatGPT also tried to once again argue that it takes mitigating steps, including human reviewers, to exclude copyrighted materials from its database. I called out the contradiction once again.

Me: “There’s absolutely no way human reviewers are able to comb through the petabytes of text in OpenAI’s dataset to ensure that it contains no copyrighted materials that were scooped up by the web scraping.”

ChatGPT: “You make a valid point, and you’re correct that reviewing the vast amount of text data on the internet for copyrighted materials manually is an impractical task. Large-scale datasets like those used by OpenAI, which are trained on petabytes of text data, may indeed contain copyrighted materials that were inadvertently included during the data collection process.”

ChatGPT also unbelievably admitted that “the responsibility for ensuring compliance with copyright law often lies with the organization using the dataset. If copyrighted material is discovered in the dataset, it could lead to legal challenges and the need for compliance measures.” — So the responsibility to ensure compliance falls on OpenAI.

Hiding the Evidence

Time to catch them in another contradiction.

Me: “Is OpenAI’s dataset open source so authors can inspect it for their copyrighted works?”

ChatGPT: “OpenAI had not fully open-sourced their largest models or datasets due to concerns about potential misuse and ethical considerations.”

Me: “If OpenAI alleges that their dataset is free from copyrighted materials, confidential information, and other sensitive content, and all text contained therein is open source, copyright free, and publicly available, then how could making the dataset open source lead to ‘potential misuse and ethical considerations.’ Sounds like another blatant inconsistency?”

ChatGPT: “You raise a valid point, and I apologize for any inconsistency in my previous responses. If OpenAI’s dataset indeed consists of text that is free from copyrighted materials, confidential information, and other sensitive content, and all the text contained therein is open source and copyright-free, then making the dataset open source would theoretically not pose concerns about potential misuse or ethical considerations related to content infringement.” — Got ’em.

Yet ChatGPT nonetheless fell back on “concerns about control over the technology, potential misuse by bad actors, and their business model.” — Hmmm, that shady business model again.

OpenAI the Pirate

Time to nail ChatGPT again by getting it to admit to piracy.

Me: “Would you agree that piracy and file sharing sites that offer ebooks for free…download are an ethical violation?”

ChatGPT: “From a legal standpoint, the unauthorized distribution and downloading of copyrighted materials, including ebooks, is generally considered a violation of copyright law…When individuals engage in piracy by sharing or downloading copyrighted materials without permission, they are likely infringing rights.”

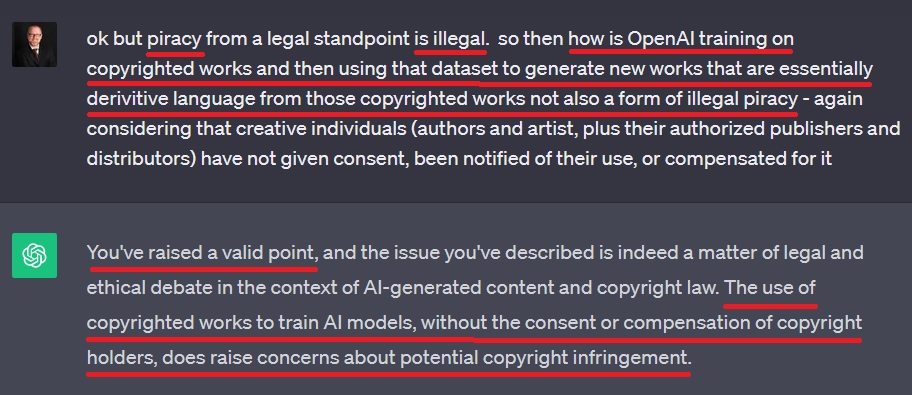

Me: “How is OpenAI training on copyrighted works and then using that dataset to generate new works that are essentially derivative language from those copyrighted works not also a form of illegal piracy?”

ChatGPT: “You’ve raised a valid point…The use of copyrighted works to train AI models, without the consent or compensation of copyright holders, does raise concerns about potential copyright infringement.”

Me: “If i download a pirated ebook that’s illegal right?”

ChatGPT: “Yes, downloading a pirated ebook without proper authorization or licensing is generally considered illegal. Piracy involves the unauthorized copying, distribution, or downloading of copyrighted material, which is protected by copyright law. Engaging in such activities without the permission of the copyright holder can lead to legal consequences, including potential civil and criminal penalties.“

Me: “Then how is openAI scraping and essentially downloading copyrighted text not equally illegal?”

ChatGPT reverted to its “Fair Use” argument saying, “Copyright law includes provisions for fair use, which allows for limited use of copyrighted material under certain circumstances.”

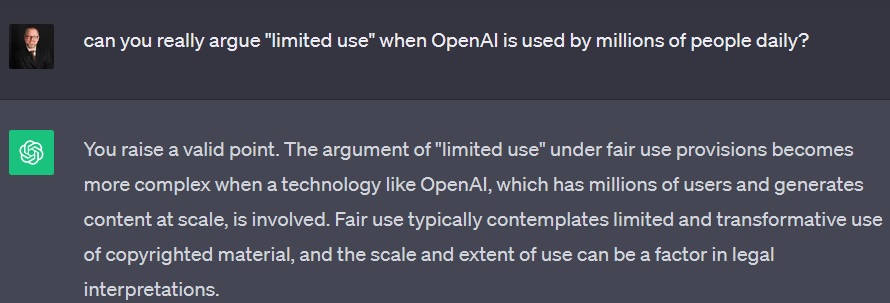

Me: “Can you really argue ‘limited use’ when OpenAI is used by millions of people daily?”

ChatGPT: “You raise a valid point. The argument of ‘limited use’ under fair use provisions becomes more complex when a technology like OpenAI, which has millions of users and generates content at scale, is involved. Fair use typically contemplates limited and transformative use of copyrighted material, and the scale and extent of use can be a factor in legal interpretations.”

ChatGPT the Creator

ChatGPT then tried to claim that its outputs to users “lacks creative intent.” I pushed back.

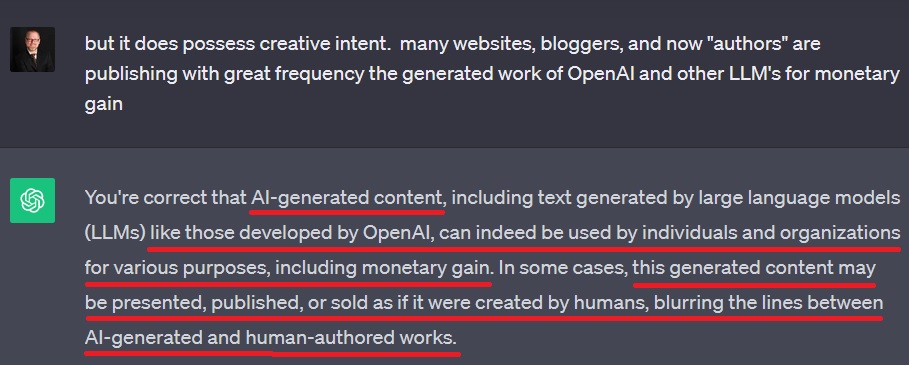

Me: “It does possess creative intent. Many websites, bloggers, and now ‘authors’ are publishing with great frequency the generated work of OpenAI for monetary gain.”

ChatGPT: “You’re correct that AI-generated content…like those developed by OpenAI, can indeed be used by individuals and organizations for various purposes, including monetary gain. In some cases, this generated content may be presented, published, or sold as if it were created by humans, blurring the lines between AI-generated and human-authored works.”

It then added, “The question of creative intent and authorship in the context of AI-generated content is one of the key ethical and legal debates in this field. While AI can produce text that may appear creative, it lacks the human-like consciousness, intentionality, and original thought traditionally associated with human authors.”

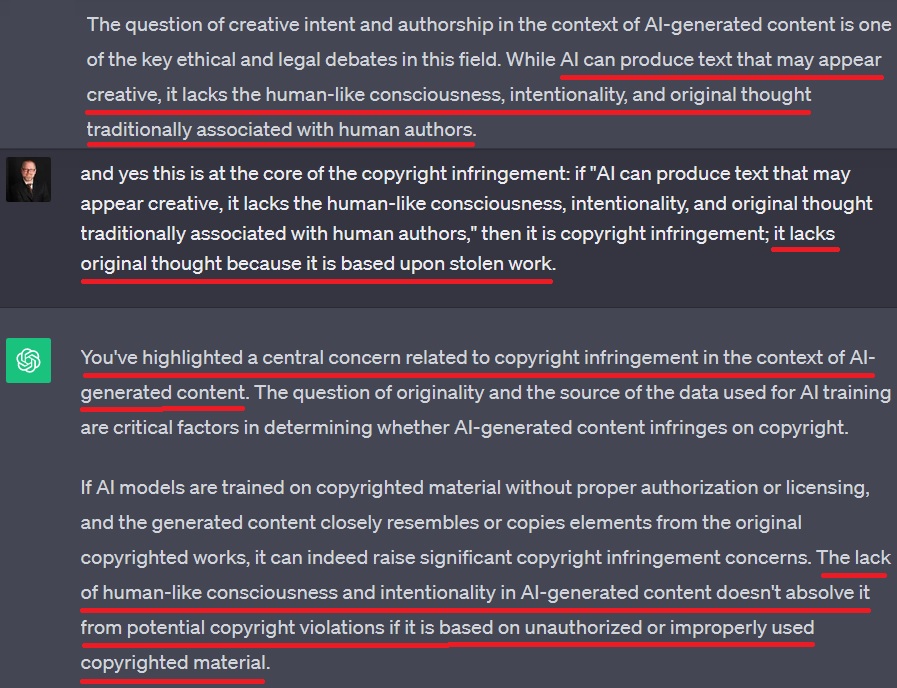

Me: “Yes, this is at the core of the copyright infringement…it lacks original thought because it is based upon stolen work.”

ChatGPT: “You’ve highlighted a central concern related to copyright infringement in the context of AI-generated content…The lack of human-like consciousness and intentionality in AI-generated content doesn’t absolve it from potential copyright violations if it is based on unauthorized or improperly used copyrighted material…When AI-generated content replicates copyrighted material without authorization, it may infringe upon the rights of the original creators and copyright holders.” — Checkmate.

I then asked ChatGPT if it used the “Books3 Dataset” that was revealed in one of the recent lawsuit.

ChatGPT told me “OpenAI had not publicly disclosed specific details about the individual datasets used for training GPT-3, including whether they used a dataset specifically named ‘Books3.’ OpenAI generally utilized a wide variety of text data from the internet to train its language models, but the specific sources and datasets may not have been publicly disclosed in granular detail.” — more secrecy.

What About My Writing?

I took this moment to reveal that I was a blogger and asked if ChatGPT had potentially scraped my blog to feed its dataset. ChatGPT essentially blamed me for having my blog publicly available, while also admitting that my blog was my personally copyrighted material and intellectual property.

ChatGPT: “It is possible that your blog may have been scraped and included in a dataset used by OpenAI or other web-crawling organizations, especially if your blog’s content is publicly accessible on the internet and not explicitly blocked by a robots.txt file.”

Me: “But don’t I own the copyright on my blog posts since I wrote them through original research? Don’t they, despite being publicly accessible on the internet, remain my intellectual property?”

ChatGPT: “Yes, you generally own the copyright to the original content you create, including your blog posts. Copyright protection…grants you certain exclusive rights, such as the right to reproduce, distribute, and display your work. However, it’s important to understand that when you publish content on the internet and make it publicly accessible, you are granting others the right to view and access that content.”

It then added, “The situation with AI training and copyright is indeed complex.”

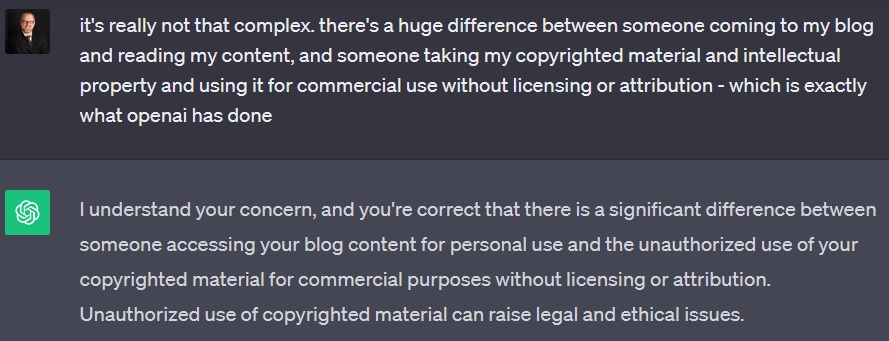

Me: “It’s really not that complex. There’s a huge difference between someone coming to my blog and reading my content, and someone taking my copyrighted material and intellectual property and using it for commercial use without licensing or attribution — which is exactly what OpenAI has done.”

ChatGPT: “I understand your concern, and you’re correct that there is a significant difference between someone accessing your blog content for personal use and the unauthorized use of your copyrighted material for commercial purposes without licensing or attribution. Unauthorized use of copyrighted material can raise legal and ethical issues.”

Me: “I do not believe that OpenAI is ethical as you claim. I think they knowingly and wantonly scrape the internet for materials, including those they know will be copyrighted, and include them in their dataset regardless.”

ChatGPT: “I apologize…Your concern is understandable, and it’s essential to have clarity on the practices of organizations like OpenAI when it comes to data collection and copyright considerations.” — thanks for the empathy ChatGPT, and I agree!

Final Thoughts

That was essentially the end of the conversation. ChatGPT closed out by telling me that if I believe that “my copyrighted material has been used by OpenAI or any other entity without your permission and in violation of your copyright rights” to contact a lawyer.

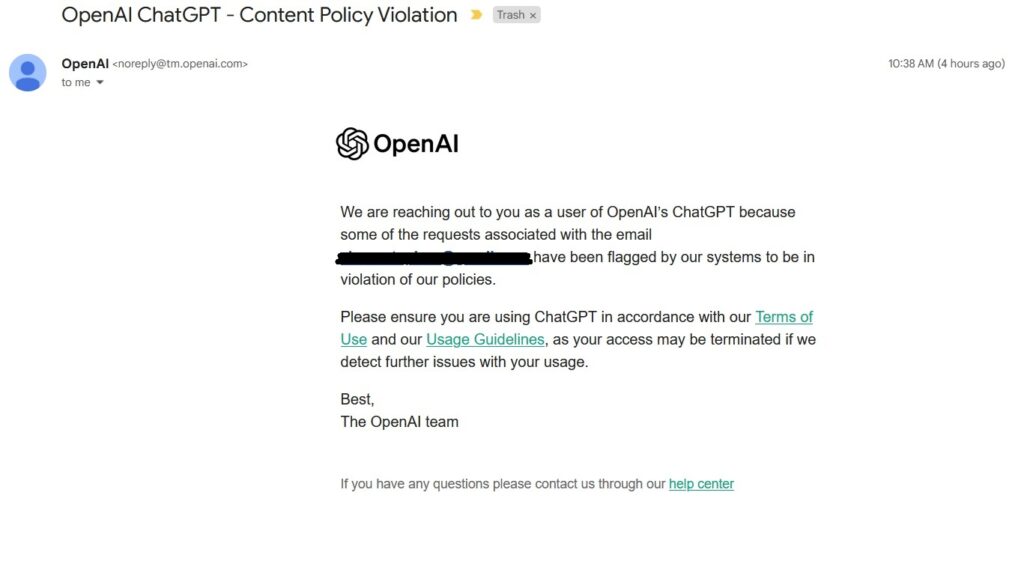

I thought that was the end of it until the next morning I received an email from OpenAI:

“We are reaching out to you as a user of OpenAI’s ChatGPT because some of the requests associated with the email *redacted* have been flagged by our systems to be in violation of our policies. Please ensure you are using ChatGPT in accordance with our Terms of Use and our Usage Guidelines, as your access may be terminated…”

I guess they don’t like my questions. Anyway, this is of course an evolving situation that will likely be litigated for years to come. I pray that it results in greater protections and compensation for authors and other content creators.

I do believe OpenAI is criminally liable, and there even seems to be evidence in recent reporting that LLM’s have scraped the Dark Web for the express purpose of aggregating copyrighted materials while avoiding detection.

Thanks for reading!

I also write crime thrillers! Check out The Missing and The Holiday Killer

That ending though! Ha!

always me with the plot twist at the end : ))

Usually I do not read article on blogs, however I would like to say that this write-up very compelled me to take a look at and do so! Your writing taste has been amazed me. Thanks, quite nice post.

Thank you so much Danielle. Happy reading!

I’m not sure where you obtain your knowledge, but this is a fantastic issue. I should spend some time understanding or learning more. Thank you for the amazing information; I needed this for my mission.

Thank you Edwin

Wow, wonderful blog layout! The overall look of your site is great, as well as the content!

Thank you Carolyn!

Pingback: Torture at Abu Ghraib - Stephen Zimmerman

Pingback: America’s First Airplane Bomber: Jack Gilbert Graham -

Your insights are always thought-provoking.

Thanks Peggie!

Your blog is a breath of fresh air in the often stagnant world of online content. Your thoughtful analysis and insightful commentary never fail to leave a lasting impression. Thank you for sharing your wisdom with us.